The Hidden Foundation of AI

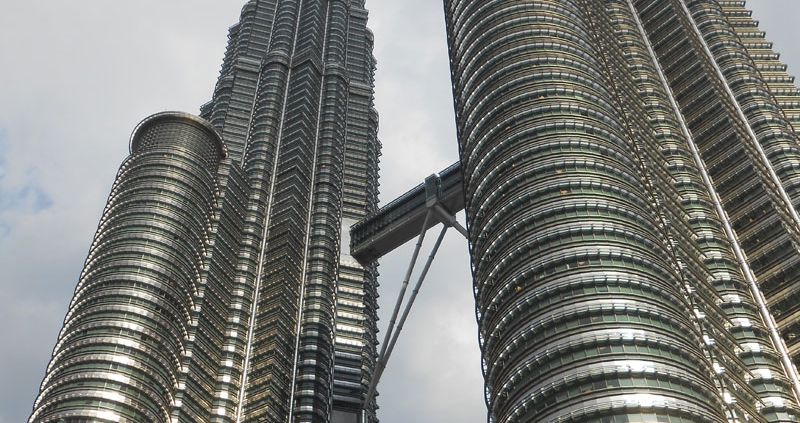

If you went to Malaysia and flipped the Petronas Towers upside down, you’d find something surprising: their foundations extend more than a quarter of the towers’ visible height. No other megastructure’s underpinnings even come close. And this very expensive design decision wasn’t made out of an abundance of caution. Engineers were dealing with some of the most unpredictable soil conditions in the world; sinking (literally) all that money into the foundation was an architectural necessity. In other words, it was the price of success.

Many of today’s sky-high AI projects lack a firm foundation. Pilots and proofs of concept are great, but they’re just artist’s renderings. Scalable, enterprise-grade AI has to be anchored. Otherwise, even the best ideas will have a hard time getting off the ground. Your technical and data foundation has to be flexible and resilient – an infrastructure capable of evolving with tomorrow’s unknowns. Do it right and the sky’s the limit.

Flexible, scalable, resilient, evolving, adaptable, future-proofed – in a way, these are all synonyms. You get the idea, so let’s dig into the details. You want to invest in modern platforms that act as structural supports across any AI lifecycle:

- Data lakehouses and streaming platforms for capturing transactional and IoT sensor data

- Intelligence layers for making meaning out of event-based data in real time

- Integrated environments for developing, testing, and deploying systems

- Cloud infrastructure that provides flexible, cost-effective compute

The Data Lakehouse and Streaming Platforms

The foundation of any AI project will only ever be as good as the data it’s built on. Organizations must establish a centralized data repository that can store both structured and unstructured data at scale.

Structured data from transactional systems, operational platforms, and financial systems remains essential. But today unstructured data – documents, logs, images, conversations, and sensor streams – represents the majority of the enterprise’s information footprint. AI systems depend on both.

The Intelligence Layer

Perhaps the most important architectural shift underway is the move to event-based data systems. When a business event occurs – whether it’s a customer interaction, operational disruption, or system signal – AI models should be able to immediately provide insight or automate action. In traditional architectures, systems are connected through tightly controlled pipelines, each built for a specific purpose. That approach does not scale well in an AI-driven enterprise.

This is where a semantic or knowledge graph layer becomes critical: it provides context that helps AI systems understand relationships between customers, operations, assets, events, and processes. Without this layer, AI models operate in fragments. With it, they have situational awareness.
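
To make the idea of a relationship layer concrete, here is a minimal sketch in Python. It assumes a toy in-memory list of subject-predicate-object triples; the entity names (`customer:42`, `order:A-2`, and so on) and the `context_for` helper are hypothetical, and a real deployment would sit on a dedicated graph store rather than a Python list.

```python
from collections import defaultdict
from typing import Dict, List, Tuple

Triple = Tuple[str, str, str]  # (subject, predicate, object)

# Hypothetical facts linking a customer, an order, an asset, and an event.
TRIPLES: List[Triple] = [
    ("customer:42", "placed", "order:A-2"),
    ("order:A-2", "ships_from", "warehouse:east"),
    ("warehouse:east", "affected_by", "event:storm-delay"),
]


def build_index(triples: List[Triple]) -> Dict[str, List[Tuple[str, str]]]:
    """Index outgoing edges by subject so lookups do not scan every triple."""
    index: Dict[str, List[Tuple[str, str]]] = defaultdict(list)
    for subject, predicate, obj in triples:
        index[subject].append((predicate, obj))
    return index


def context_for(entity: str, index: Dict[str, List[Tuple[str, str]]],
                max_hops: int = 3) -> List[Triple]:
    """Collect the facts reachable from `entity` within `max_hops` edges."""
    facts: List[Triple] = []
    frontier = [entity]
    seen = {entity}
    for _ in range(max_hops):
        next_frontier = []
        for node in frontier:
            for predicate, obj in index.get(node, []):
                facts.append((node, predicate, obj))
                if obj not in seen:
                    seen.add(obj)
                    next_frontier.append(obj)
        frontier = next_frontier
    return facts


index = build_index(TRIPLES)
facts = context_for("customer:42", index)
```

Starting from a single customer, the traversal surfaces the order, the warehouse, and the disruption affecting it. That chain of related facts is the kind of context a model would otherwise see only in fragments.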

Event-driven architectures create what is effectively a data “super highway,” where producers and consumers of data can connect through shared streams rather than individual integrations. Business events of all kinds flow continuously across the enterprise. The result is a true ecosystem rather than a collection of pipelines.
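
The shared-stream idea can be sketched in a few lines of Python. This is an illustration only: `EventBus` is a hypothetical in-memory stand-in for a real streaming platform, and the `orders` stream, the handlers, and the 1000 threshold are all invented for the example.

```python
from collections import defaultdict
from typing import Callable, Dict, List

Event = dict
Handler = Callable[[Event], None]


class EventBus:
    """Hypothetical in-memory stand-in for a streaming platform."""

    def __init__(self) -> None:
        self._subscribers: Dict[str, List[Handler]] = defaultdict(list)

    def subscribe(self, stream: str, handler: Handler) -> None:
        """Attach a consumer to a named stream."""
        self._subscribers[stream].append(handler)

    def publish(self, stream: str, event: Event) -> int:
        """Deliver an event to every consumer; return how many received it."""
        handlers = self._subscribers.get(stream, [])
        for handler in handlers:
            handler(event)
        return len(handlers)


bus = EventBus()
fraud_alerts: List[str] = []
audit_log: List[Event] = []


def flag_large_orders(event: Event) -> None:
    # Illustrative rule: flag any order above an arbitrary threshold.
    if event["amount"] > 1000:
        fraud_alerts.append(event["order_id"])


# Two independent consumers share one stream; the producer knows neither.
bus.subscribe("orders", flag_large_orders)
bus.subscribe("orders", audit_log.append)

bus.publish("orders", {"order_id": "A-1", "amount": 250})
bus.publish("orders", {"order_id": "A-2", "amount": 4800})
```

The producer publishes once and never references its consumers, so adding a third consumer means one more `subscribe` call rather than a new point-to-point integration.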

AI thrives on timeliness. The closer insight is to the moment of decision, the greater the business value.

Integrated Environments

Building spaces where models can be not only developed but also tested and deployed is critical. But this integrated approach is more complex than it sounds, and it isn’t one size fits all.

For traditional machine learning, this kind of integration includes:

- feature engineering environments that allow teams to create and refine predictive signals

- feature stores that ensure consistency between training and production

- scalable training infrastructure

- robust CI/CD pipelines to automate testing, deployment, and monitoring
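
The consistency point in the list above is the easiest one to show in code. The following Python sketch assumes a hypothetical `FeatureRegistry` that holds each feature definition exactly once, so training and serving cannot drift apart; the feature names and record fields are invented, and production feature stores add storage, versioning, and point-in-time lookups that are omitted here.

```python
from dataclasses import dataclass
from typing import Callable, Dict


@dataclass(frozen=True)
class FeatureDefinition:
    """A named feature and the single function that computes it."""
    name: str
    compute: Callable[[dict], float]


class FeatureRegistry:
    """Hypothetical registry: one definition per feature, used everywhere."""

    def __init__(self) -> None:
        self._features: Dict[str, FeatureDefinition] = {}

    def register(self, feature: FeatureDefinition) -> None:
        if feature.name in self._features:
            raise ValueError(f"feature already registered: {feature.name}")
        self._features[feature.name] = feature

    def build_vector(self, record: dict) -> Dict[str, float]:
        """Compute every registered feature for one raw record."""
        return {name: f.compute(record) for name, f in self._features.items()}


registry = FeatureRegistry()
registry.register(FeatureDefinition(
    "avg_order_value",
    lambda r: r["total_spend"] / max(r["order_count"], 1),
))
registry.register(FeatureDefinition(
    "is_repeat_customer",
    lambda r: float(r["order_count"] > 1),
))

raw_record = {"total_spend": 300.0, "order_count": 4}

# The training job and the live service call the same code path.
training_row = registry.build_vector(raw_record)
serving_row = registry.build_vector(raw_record)
```

Because both paths go through `build_vector`, a change to a feature definition reaches training and production together instead of being reimplemented twice.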

For generative and agentic AI, the requirements expand:

- environments specifically designed for prompt engineering, context testing, and evaluation of system behavior

- integration with CI/CD pipelines so that changes to prompts, orchestration logic, or model configurations can be deployed safely and consistently

In both cases, automation and repeatability are key. AI cannot scale if every workflow is handcrafted.
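
For generative systems, one way to make prompt changes repeatable is to treat evaluation cases like unit tests. The Python sketch below is a simplified illustration: `build_prompt`, `EvalCase`, and `run_evals` are hypothetical names, the refund policy text is invented, and `stub_model` stands in for a real model call so the example runs offline.

```python
from typing import Callable, List, NamedTuple


class EvalCase(NamedTuple):
    """A question plus a phrase the answer is expected to contain."""
    question: str
    must_contain: str


def build_prompt(question: str) -> str:
    """The prompt under test; editing this text should trigger the evals."""
    return (
        "Answer using only the policy below.\n"
        "Policy: Refunds are accepted within 30 days of purchase.\n"
        f"Question: {question}"
    )


def run_evals(model: Callable[[str], str], cases: List[EvalCase]) -> List[str]:
    """Return the questions whose answers miss the expected phrase."""
    failures = []
    for case in cases:
        answer = model(build_prompt(case.question))
        if case.must_contain.lower() not in answer.lower():
            failures.append(case.question)
    return failures


def stub_model(prompt: str) -> str:
    """Stand-in for a real model: it simply repeats the policy line."""
    for line in prompt.splitlines():
        if line.startswith("Policy:"):
            return line[len("Policy:"):].strip()
    return "I don't know."


cases = [EvalCase("How long do I have to return an item?", "30 days")]
failures = run_evals(stub_model, cases)
```

In a pipeline, a non-empty `failures` list would block the deployment, which gives a prompt edit the same safety net as a code change.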

Why the Cloud Is Non-Negotiable

The economics of AI make using the cloud imperative. Scalable and cost-effective compute requires cloud infrastructure and will do so for the foreseeable future (barring a technological quantum leap).

Machine learning workloads often require enormous bursts of compute during training but relatively modest resources during runtime. Generative and agentic AI workloads may actually invert that pattern – especially when large language models are involved – requiring sustained inference capacity.

Cloud infrastructure allows organizations to dynamically match resources to demand rather than overbuilding fixed infrastructure that sits idle much of the time. Will the emergence of small language models change this calculus? Only time will tell.
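
A quick back-of-the-envelope calculation shows why idle capacity matters. Every number in this Python sketch is invented for illustration: the demand profile, the hourly rates, and the assumption that elastic capacity costs 50% more per unit-hour than owned capacity.

```python
from typing import List


def fixed_capacity_cost(demand: List[float], rate: float) -> float:
    """Cost of provisioning for the peak hour and paying for it every hour."""
    return max(demand) * len(demand) * rate


def elastic_cost(demand: List[float], rate: float) -> float:
    """Cost of paying only for the units actually used each hour."""
    return sum(demand) * rate


# Hypothetical day: a 4-hour training burst, then 20 hours of light serving.
bursty_day = [100.0] * 4 + [5.0] * 20

fixed = fixed_capacity_cost(bursty_day, rate=1.0)   # 2400.0
elastic = elastic_cost(bursty_day, rate=1.5)        # 750.0

# Share of the fixed capacity that is actually used over the day.
utilization = sum(bursty_day) / (max(bursty_day) * len(bursty_day))
```

Under these made-up figures the fixed fleet is used about a fifth of the time, so elastic capacity comes out cheaper even at a higher unit price. The comparison depends entirely on how bursty demand is, which is why the sustained inference pattern described above deserves its own calculation.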

Final Thoughts

In the end, the companies that win with AI won’t be those with the most algorithms. They’ll be the ones with the strongest foundations. Successfully scaling AI has little to do with improving models. It’s about building the environment in which better models can thrive.

Organizations that invest in modern platforms position themselves to move beyond experimentation. They create the conditions for AI to become embedded in the fabric of the business.

From the ground up.